# **Log Center**

## Log processing flow

This requires the log-core dependency.

Modify the following setting:

```

<springProperty name="LOG_FILE" scope="context" source="logging.file" defaultValue="/logs/${APP_NAME}"/>

```

Run the following on each machine where the microservices are deployed:

mkdir /logs

chmod -R 777 /logs

The log center displays the data extracted by ELK.

ELK and Filebeat must be deployed before starting log-center.

# Elasticsearch installation

```

mkdir /app

cd /app

wget https://artifacts.elastic.co/downloads/elasticsearch/elasticsearch-6.5.4.tar.gz

tar -zxvf elasticsearch-6.5.4.tar.gz

useradd es

# Set the heap size in config/jvm.options to half of the machine's memory

vi /app/elasticsearch-6.5.4/config/jvm.options

-Xms512m

-Xmx512m

# Adjust the max file and max virtual memory parameters

# Run as root or as a sudo user

vi /etc/sysctl.conf

# Add the following setting:

vm.max_map_count=655360

# Then run:

sysctl -p

sysctl -p

grep -q "* - nofile" /etc/security/limits.conf || cat >> /etc/security/limits.conf << EOF

########################################

* - nofile 1048576

* - nproc 65536

* - stack 65536

EOF

grep -q "ulimit -n" /etc/profile || cat >> /etc/profile << EOF

########################################

ulimit -n 1048576

ulimit -u 65536

ulimit -s 65536

EOF

vi /app/elasticsearch-6.5.4/config/elasticsearch.yml

cluster.name: elasticsearch

node.name: node-1

network.host: 0.0.0.0

http.port: 9200

node.max_local_storage_nodes: 2

http.cors.enabled: true

http.cors.allow-origin: "*"

chown -R es:es /app/elasticsearch-6.5.4/

su - es -c '/app/elasticsearch-6.5.4/bin/elasticsearch -d'

```
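Before starting Elasticsearch it can help to confirm that the kernel and ulimit settings above actually took effect. The following is a minimal sketch and not part of the original setup: the `check_min` helper is hypothetical, and the thresholds are simply the values configured above.

```shell
#!/bin/sh
# check_min NAME ACTUAL REQUIRED
# Prints OK when ACTUAL is "unlimited" or a number >= REQUIRED, FAIL otherwise.
# (Hypothetical helper for illustration; not shipped with Elasticsearch.)
check_min() {
  if [ "$2" = "unlimited" ]; then
    echo "OK   $1=$2"
  elif [ "$2" -ge "$3" ] 2>/dev/null; then
    echo "OK   $1=$2 (>= $3)"
  else
    echo "FAIL $1=$2 (expected >= $3)"
  fi
}

# Read the live values; fall back to 0 if the kernel value cannot be read.
map_count=$(cat /proc/sys/vm/max_map_count 2>/dev/null || echo 0)
open_files=$(ulimit -n)

# Thresholds are the values written to /etc/sysctl.conf and /etc/profile above.
check_min vm.max_map_count "$map_count" 655360
check_min "ulimit -n" "$open_files" 1048576
```

The limits only apply to new login sessions, so it is probably most useful to run this in a fresh login shell of the `es` user.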

# Logstash installation and configuration

```

cd /app

wget https://artifacts.elastic.co/downloads/logstash/logstash-6.5.4.tar.gz

tar -zxvf logstash-6.5.4.tar.gz

cd logstash-6.5.4/

ls

cd bin

vi logstash.conf

```

# logstash.conf contents

```

input {
  beats {
    port => 5044
  }
}

filter {
  if [fields][docType] == "sys-log" {
    grok {
      # MYTHREADNAME is a custom pattern, so the patterns directory is needed here as well
      patterns_dir => ["/app/logstash-6.5.4/patterns"]
      match => { "message" => "\[%{NOTSPACE:appName}\:%{NOTSPACE:serverIp}\:%{NOTSPACE:serverPort}\] %{TIMESTAMP_ISO8601:logTime} %{LOGLEVEL:logLevel} %{WORD:pid} \[%{MYTHREADNAME:threadName}\] %{NOTSPACE:classname} %{GREEDYDATA:message}" }
      overwrite => ["message"]
    }
    # Set @timestamp from the time recorded in the log line
    date {
      match => ["logTime","yyyy-MM-dd HH:mm:ss.SSS"]
    }
    # Also store the parsed time in a separate "timestamp" field
    date {
      match => ["logTime","yyyy-MM-dd HH:mm:ss.SSS"]
      target => "timestamp"
    }
    mutate {
      remove_field => ["logTime", "@version", "host", "offset"]
    }
  }

  if [fields][docType] == "point-log" {
    grok {
      patterns_dir => ["/app/logstash-6.5.4/patterns"]
      match => {
        "message" => "%{TIMESTAMP_ISO8601:timestamp}\|%{MYAPPNAME:appName}\|%{WORD:resouceid}\|%{MYAPPNAME:type}\|%{GREEDYDATA:object}"
      }
    }
    # Split the trailing key=value&key=value payload into separate fields
    kv {
      source => "object"
      field_split => "&"
      value_split => "="
    }
  }
}

output {
  if [fields][docType] == "sys-log" {
    elasticsearch {
      hosts => ["35.192.230.214:9200"]
      manage_template => false
      index => "ocp-log-%{+YYYY.MM.dd}"
      document_type => "%{[@metadata][type]}"
    }
  }

  if [fields][docType] == "point-log" {
    elasticsearch {
      hosts => ["35.192.230.214:9200"]
      manage_template => false
      index => "point-log-%{+YYYY.MM.dd}"
      document_type => "%{[@metadata][type]}"
    }
  }
}

```
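To make the `point-log` branch more concrete, the sketch below mimics in plain shell what the grok and kv filters do with one pipe-delimited line: split on `|` into the five fields, then split `object` on `&`. The sample line and its values are made up for illustration, and this is only an approximation of how Logstash behaves.

```shell
#!/bin/sh
# parse_point_log LINE: rough shell approximation of the point-log grok + kv filters.
parse_point_log() {
  printf '%s\n' "$1" | {
    # Same field order as the grok pattern: timestamp|appName|resouceid|type|object
    IFS='|' read -r timestamp app_name resource_id log_type object
    echo "timestamp=$timestamp"
    echo "appName=$app_name"
    echo "resouceid=$resource_id"
    echo "type=$log_type"
    # kv equivalent: field_split "&" puts each key=value pair on its own line
    printf '%s\n' "$object" | tr '&' '\n'
  }
}

# Hypothetical sample line; real point logs are written by the application.
parse_point_log '2019-01-01 12:00:00.123|user-center|1001|login|userId=42&result=ok'
```

Each printed `key=value` line corresponds to one field that would end up on the event sent to the `point-log-*` index.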

## Using grok in Logstash

~~~

mkdir -p /app/logstash-6.5.4/patterns

cd /app/logstash-6.5.4/patterns

vi java

# user-center

MYAPPNAME ([0-9a-zA-Z_-]*)

MYTHREADNAME ([0-9a-zA-Z._-]|\(|\)|\s)*

# Start Logstash from the bin directory, where logstash.conf was created

cd /app/logstash-6.5.4/bin

nohup ./logstash -f logstash.conf >&/dev/null &

~~~
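A custom pattern is easy to get subtly wrong, so it can be worth checking candidate values against it before restarting Logstash. The sketch below uses `grep -E` with an anchored copy of `MYTHREADNAME` (with `\s` written as `[[:space:]]` for portability). The sample thread names are assumptions, and `grep` is only an approximation of the regex engine that grok uses.

```shell
#!/bin/sh
# matches_thread_name NAME: succeeds if NAME consists only of characters
# allowed by the MYTHREADNAME pattern defined above.
matches_thread_name() {
  printf '%s\n' "$1" | grep -Eq '^([0-9a-zA-Z._-]|\(|\)|[[:space:]])*$'
}

# Hypothetical thread names: the first two should match, the last should not.
for name in 'http-nio-7000-exec-1' 'SimpleAsyncTaskExecutor (1)' 'pool#1/worker'; do
  if matches_thread_name "$name"; then
    echo "match:    $name"
  else
    echo "no match: $name"
  fi
done
```

If a real thread name in your logs is reported as "no match", the sys-log grok would likely fail on that line, and the character class would need extending.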

# Filebeat

Filebeat (collect, aggregate) -> Logstash (filter, structure) -> ES

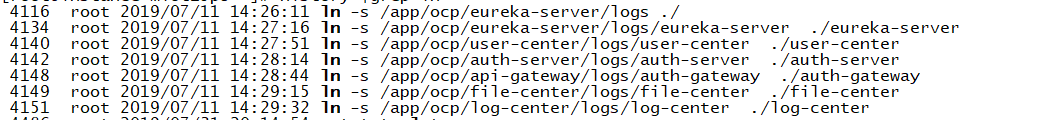

Filebeat collects the logs matching /logs/*/*.log. You can create symbolic links so that the logs of different modules all appear under /logs.
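The symbolic-link approach can be sketched as follows. To stay harmless, the example works in a temporary directory instead of the real `/logs`, and the `file-center` module path is purely hypothetical.

```shell
#!/bin/sh
# Build a throwaway directory tree so the sketch does not touch the real /logs.
root=$(mktemp -d)
trap 'rm -rf "$root"' EXIT

# Hypothetical module that writes its logs somewhere outside logs/.
mkdir -p "$root/opt/file-center/logs" "$root/logs"
echo 'sample line' > "$root/opt/file-center/logs/file-center-info.log"

# Link the module's log directory under logs/ so it matches the logs/*/*.log glob.
ln -s "$root/opt/file-center/logs" "$root/logs/file-center"

# List the files the glob now picks up.
ls "$root"/logs/*/*.log
```

On a real machine the equivalent would be a single `ln -s <module log directory> /logs/<module name>` per module.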

```

cd /app

wget https://artifacts.elastic.co/downloads/beats/filebeat/filebeat-6.5.4-linux-x86_64.tar.gz

tar -zxvf filebeat-6.5.4-linux-x86_64.tar.gz

cd /app/filebeat-6.5.4-linux-x86_64

vi filebeat.yml

# filebeat.yml configuration

###################### Filebeat Configuration Example #########################

# This file is an example configuration file highlighting only the most common

# options. The filebeat.reference.yml file from the same directory contains all the

# supported options with more comments. You can use it as a reference.

#

# You can find the full configuration reference here:

# https://www.elastic.co/guide/en/beats/filebeat/index.html

# For more available modules and options, please see the filebeat.reference.yml sample

# configuration file.

#=========================== Filebeat inputs =============================

filebeat.inputs:

# Each - is an input. Most options can be set at the input level, so
# you can use different inputs for various configurations.
# Below are the input specific configurations.

- type: log
  enabled: true
  paths:
    #- /var/log/*.log
    - /logs/*/*.log
  exclude_lines: ['^DEBUG']
  # Add custom fields
  fields:
    docType: sys-log
    project: open-capacity-platform
  # Merge multi-line log entries (for example stack traces) into one event
  multiline:
    # A new event starts with the [appName:serverIp:serverPort] prefix that the Logstash grok pattern expects
    pattern: '^\[\S+:\S+:\d{2,}] '
    negate: true
    match: after
    # timeout: 5s

- type: log
  enabled: true
  paths:
    - /logs/point/*.log
  fields:
    docType: point-log
    project: open-capacity-platform

# Exclude lines. A list of regular expressions to match. It drops the lines that are

# matching any regular expression from the list.

#exclude_lines: ['^DBG']

# Include lines. A list of regular expressions to match. It exports the lines that are

# matching any regular expression from the list.

#include_lines: ['^ERR', '^WARN']

# Exclude files. A list of regular expressions to match. Filebeat drops the files that

# are matching any regular expression from the list. By default, no files are dropped.

#exclude_files: ['.gz$']

# Optional additional fields. These fields can be freely picked

# to add additional information to the crawled log files for filtering

#fields:

# level: debug

# review: 1

### Multiline options

# Multiline can be used for log messages spanning multiple lines. This is common

# for Java Stack Traces or C-Line Continuation

# The regexp Pattern that has to be matched. The example pattern matches all lines starting with [

#multiline.pattern: ^\[

# Defines if the pattern set under pattern should be negated or not. Default is false.

#multiline.negate: false

# Match can be set to "after" or "before". It is used to define if lines should be append to a pattern

# that was (not) matched before or after or as long as a pattern is not matched based on negate.

# Note: After is the equivalent to previous and before is the equivalent to to next in Logstash

#multiline.match: after

#============================= Filebeat modules ===============================

filebeat.config.modules:

  # Glob pattern for configuration loading
  path: ${path.config}/modules.d/*.yml

  # Set to true to enable config reloading
  reload.enabled: false

# Period on which files under path should be checked for changes

#reload.period: 10s

#==================== Elasticsearch template setting ==========================

setup.template.settings:

  index.number_of_shards: 3

setup.template.name: "filebeat"

setup.template.pattern: "filebeat-*"

#index.codec: best_compression

#_source.enabled: false

#================================ General =====================================

# The name of the shipper that publishes the network data. It can be used to group

# all the transactions sent by a single shipper in the web interface.

#name:

# The tags of the shipper are included in their own field with each

# transaction published.

#tags: ["service-X", "web-tier"]

# Optional fields that you can specify to add additional information to the

# output.

#fields:

# env: staging

#============================== Dashboards =====================================

# These settings control loading the sample dashboards to the Kibana index. Loading

# the dashboards is disabled by default and can be enabled either by setting the

# options here, or by using the `-setup` CLI flag or the `setup` command.

#setup.dashboards.enabled: false

# The URL from where to download the dashboards archive. By default this URL

# has a value which is computed based on the Beat name and version. For released

# versions, this URL points to the dashboard archive on the artifacts.elastic.co

# website.

#setup.dashboards.url:

#============================== Kibana =====================================

# Starting with Beats version 6.0.0, the dashboards are loaded via the Kibana API.

# This requires a Kibana endpoint configuration.

setup.kibana:

# Kibana Host

# Scheme and port can be left out and will be set to the default (http and 5601)

# In case you specify and additional path, the scheme is required: http://localhost:5601/path

# IPv6 addresses should always be defined as: https://[2001:db8::1]:5601

#host: "localhost:5601"

# Kibana Space ID

# ID of the Kibana Space into which the dashboards should be loaded. By default,

# the Default Space will be used.

#space.id:

#============================= Elastic Cloud ==================================

# These settings simplify using filebeat with the Elastic Cloud (https://cloud.elastic.co/).

# The cloud.id setting overwrites the `output.elasticsearch.hosts` and

# `setup.kibana.host` options.

# You can find the `cloud.id` in the Elastic Cloud web UI.

#cloud.id:

# The cloud.auth setting overwrites the `output.elasticsearch.username` and

# `output.elasticsearch.password` settings. The format is `<user>:<pass>`.

#cloud.auth:

#================================ Outputs =====================================

# Configure what output to use when sending the data collected by the beat.

#-------------------------- Elasticsearch output ------------------------------

#output.elasticsearch:

# Array of hosts to connect to.

# hosts: ["192.168.28.130:9200"]

# index: "filebeat-log"

# Optional protocol and basic auth credentials.

#protocol: "https"

#username: "elastic"

#password: "changeme"

#----------------------------- Logstash output --------------------------------

output.logstash:

  # The Logstash hosts
  hosts: ["127.0.0.1:5044"]

  bulk_max_size: 2048

# Optional SSL. By default is off.

# List of root certificates for HTTPS server verifications

#ssl.certificate_authorities: ["/etc/pki/root/ca.pem"]

# Certificate for SSL client authentication

#ssl.certificate: "/etc/pki/client/cert.pem"

# Client Certificate Key

#ssl.key: "/etc/pki/client/cert.key"

#================================ Procesors =====================================

# Configure processors to enhance or manipulate events generated by the beat.

processors:

- add_host_metadata: ~

- add_cloud_metadata: ~

#================================ Logging =====================================

# Sets log level. The default log level is info.

# Available log levels are: error, warning, info, debug

#logging.level: debug

# At debug level, you can selectively enable logging only for some components.

# To enable all selectors use ["*"]. Examples of other selectors are "beat",

# "publish", "service".

#logging.selectors: ["*"]

#============================== Xpack Monitoring ===============================

# filebeat can export internal metrics to a central Elasticsearch monitoring

# cluster. This requires xpack monitoring to be enabled in Elasticsearch. The

# reporting is disabled by default.

# Set to true to enable the monitoring reporter.

#xpack.monitoring.enabled: false

# Uncomment to send the metrics to Elasticsearch. Most settings from the

# Elasticsearch output are accepted here as well. Any setting that is not set is

# automatically inherited from the Elasticsearch output configuration, so if you

# have the Elasticsearch output configured, you can simply uncomment the

# following line.

#xpack.monitoring.elasticsearch:

```
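The multiline options are the part of this configuration that is hardest to picture. As a rough illustration of what `negate: true` with `match: after` asks Filebeat to do, the sketch below joins every line that does not match a start pattern onto the preceding event. The `merge_multiline` helper, the sample lines, and the start regex (which assumes the `[appName:serverIp:serverPort]` prefix used by the Logstash grok pattern) are all assumptions for illustration, not Filebeat's own implementation.

```shell
#!/bin/sh
# merge_multiline START_REGEX: reads lines on stdin and appends every line that
# does NOT match START_REGEX to the preceding event, joined with " <NL> ".
merge_multiline() {
  awk -v start="$1" '
    $0 ~ start { if (event != "") print event; event = $0; next }
    { event = (event == "" ? $0 : event " <NL> " $0) }
    END { if (event != "") print event }
  '
}

# Assumed event prefix: [appName:serverIp:serverPort] followed by a space.
start='^[[][^ ]+:[^ ]+:[0-9][0-9]+[]] '

merge_multiline "$start" <<'EOF'
[user-center:10.0.0.1:7000] 2019-01-01 12:00:00.123 ERROR 1 [main] com.example.Demo boom
java.lang.IllegalStateException: boom
    at com.example.Demo.main(Demo.java:1)
[user-center:10.0.0.1:7000] 2019-01-01 12:00:01.000 INFO 1 [main] com.example.Demo ok
EOF
```

The four input lines come out as two events: the stack trace stays attached to the ERROR line, which is what lets Logstash parse it as a single log entry.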

Start Filebeat:

nohup ./filebeat -e -c filebeat.yml >&/dev/null &

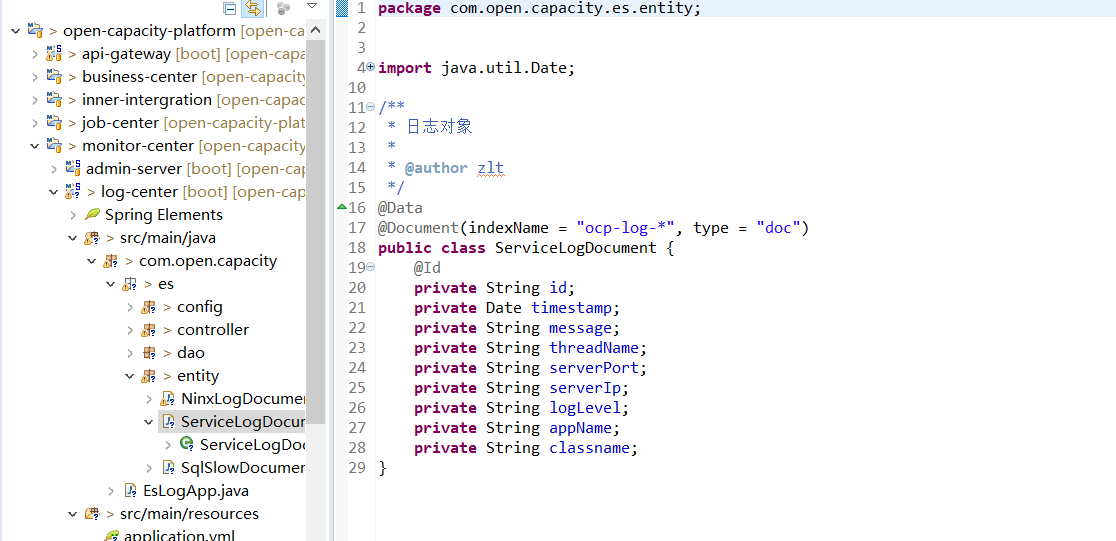

The structured log data is stored in ES in the following format:

```

{

  "timestamp": "time",
  "message": "log message text",
  "threadName": "thread name",
  "serverPort": "service port",
  "serverIp": "service IP",
  "logLevel": "log level",
  "appName": "application name",
  "classname": "class name"

}

```
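To see where these fields come from, the sketch below pulls them out of one raw log line with `sed`. The regular expression is a hand-written approximation of the sys-log grok pattern, and the sample line is hypothetical. It also prints `logTime` and `pid`, which the stored document does not show as such.

```shell
#!/bin/sh
# parse_sys_log: reads log lines on stdin and prints the captured fields,
# one per line. Approximates the sys-log grok pattern; it is not the
# expression Logstash itself compiles.
# (A message containing "|" would also be split; acceptable for a sketch.)
parse_sys_log() {
  sed -E -n 's/^\[([^ :]+):([^ :]+):([^ ]+)\] ([0-9-]+ [0-9:.]+) ([A-Z]+) ([0-9]+) \[([^]]*)\] ([^ ]+) (.*)$/appName=\1|serverIp=\2|serverPort=\3|logTime=\4|logLevel=\5|pid=\6|threadName=\7|classname=\8|message=\9/p' | tr '|' '\n'
}

# Hypothetical log line in the [appName:serverIp:serverPort] format.
line='[user-center:192.168.1.10:7000] 2019-01-01 12:00:00.123 INFO 1234 [http-nio-7000-exec-1] com.example.UserController login ok'

printf '%s\n' "$line" | parse_sys_log
```

A line that does not fit the expected layout prints nothing here, which roughly corresponds to a grok parse failure in Logstash.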

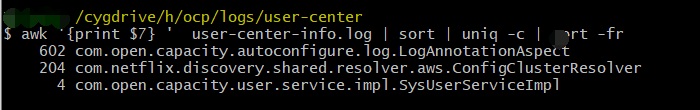

Counting calls on Linux

```

awk '{print $7}' user-center-info.log | sort | uniq -c | sort -nr

```
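As a small worked example of this pipeline, the sketch below runs it against a throwaway file. The sample lines are hypothetical; with the `[appName:serverIp:serverPort]` log layout, the seventh whitespace-separated field is the class name, so the result is a per-class count with the most frequent class first.

```shell
#!/bin/sh
# count_calls FILE: count occurrences of the 7th whitespace-separated field,
# most frequent first.
count_calls() {
  awk '{print $7}' "$1" | sort | uniq -c | sort -nr
}

# Hypothetical sample log in a temporary file.
log=$(mktemp)
trap 'rm -f "$log"' EXIT
cat > "$log" <<'EOF'
[user-center:10.0.0.1:7000] 2019-01-01 12:00:00.123 INFO 1 [main] com.example.UserController login
[user-center:10.0.0.1:7000] 2019-01-01 12:00:01.123 INFO 1 [main] com.example.RoleController list
[user-center:10.0.0.1:7000] 2019-01-01 12:00:02.123 INFO 1 [main] com.example.UserController logout
EOF

count_calls "$log"
```

Note that thread names containing spaces would shift the field positions, so the field number may need adjusting for such logs.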
