| Server | Hostname | Software |
| --- | --- | --- |
| 192.168.235.127 | demo01 | java, zk, kafka |
| 192.168.235.128 | demo02 | java, zk, kafka |
| 192.168.235.129 | demo03 | java, zk, kafka |

# zk

## Configure /etc/hosts

Add the same entries on all three nodes:

* 192.168.235.127

```
[root@demo01 ~]# vi /etc/hosts
127.0.0.1 localhost localhost.localdomain localhost4 localhost4.localdomain4
::1 localhost localhost.localdomain localhost6 localhost6.localdomain6
192.168.235.127 demo01
192.168.235.128 demo02
192.168.235.129 demo03
192.168.235.127 zookeeper01
192.168.235.128 zookeeper02
192.168.235.129 zookeeper03
```

* 192.168.235.128

```
[root@demo02 ~]# vi /etc/hosts
127.0.0.1 localhost localhost.localdomain localhost4 localhost4.localdomain4
::1 localhost localhost.localdomain localhost6 localhost6.localdomain6
192.168.235.127 demo01
192.168.235.128 demo02
192.168.235.129 demo03
192.168.235.127 zookeeper01
192.168.235.128 zookeeper02
192.168.235.129 zookeeper03
```

* 192.168.235.129

```
[root@demo03 ~]# vi /etc/hosts
127.0.0.1 localhost localhost.localdomain localhost4 localhost4.localdomain4
::1 localhost localhost.localdomain localhost6 localhost6.localdomain6
192.168.235.127 demo01
192.168.235.128 demo02
192.168.235.129 demo03
192.168.235.127 zookeeper01
192.168.235.128 zookeeper02
192.168.235.129 zookeeper03
```
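The six entries are identical on every node, so the edit can be scripted. Below is a minimal sketch, assuming it runs as root on each node; the function name `add_cluster_hosts` is hypothetical, and the target file defaults to `/etc/hosts` but can be passed as an argument. It appends only lines that are not already present, so running it twice does no harm.

```shell
# Hypothetical helper: append the cluster host entries to a hosts file,
# skipping any line that is already present (safe to re-run).
add_cluster_hosts() {
  local hosts_file="${1:-/etc/hosts}"
  local entry
  while IFS= read -r entry; do
    # -x: whole-line match, -F: fixed string (dots are not regex wildcards)
    grep -qxF "$entry" "$hosts_file" 2>/dev/null || printf '%s\n' "$entry" >> "$hosts_file"
  done <<'EOF'
192.168.235.127 demo01
192.168.235.128 demo02
192.168.235.129 demo03
192.168.235.127 zookeeper01
192.168.235.128 zookeeper02
192.168.235.129 zookeeper03
EOF
}
```

For example, run `add_cluster_hosts` on demo01, demo02 and demo03, then check name resolution with `getent hosts zookeeper01`.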

## 創建軟件目錄

On 192.168.235.127, 192.168.235.128 and 192.168.235.129, create the directory and extract the ZooKeeper package:

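```
mkdir -p /root/zookeeper
# --strip-components=1 drops the top-level zookeeper-3.4.8/ directory,
# so bin/ and conf/ end up directly under /root/zookeeper
tar -zxvf zookeeper-3.4.8.tar.gz -C /root/zookeeper --strip-components=1
```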

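## Configure myid

Each node stores its server number in a `myid` file under `dataDir` (`/root/kafka/data/zookeeper`, as set in zoo.cfg below):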

* 192.168.235.127

```
mkdir -p /root/kafka/data/zookeeper
cd /root/kafka/data/zookeeper
[root@demo01 zookeeper]# vi myid
1
```

* 192.168.235.128

```
mkdir -p /root/kafka/data/zookeeper
cd /root/kafka/data/zookeeper
[root@demo02 zookeeper]# vi myid
2
```

* 192.168.235.129

```
mkdir -p /root/kafka/data/zookeeper
cd /root/kafka/data/zookeeper
[root@demo03 zookeeper]# vi myid
3
```
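Because the myid values follow the hostnames (demo01 gives 1, and so on), they can also be derived automatically. A minimal sketch, assuming the `demoNN` hostname pattern from the table above; `write_myid` is a hypothetical helper, not part of ZooKeeper.

```shell
# Hypothetical helper (not part of ZooKeeper): write this node's myid file.
# Assumes hostnames end in the server number, e.g. demo01 -> 1, demo03 -> 3.
write_myid() {
  local host="${1:-$(hostname -s)}"
  local data_dir="${2:-/root/kafka/data/zookeeper}"
  local id="${host##*[!0-9]}"      # trailing digits only: "demo01" -> "01"
  id="${id#"${id%%[!0]*}"}"        # drop leading zeros: "01" -> "1"
  if [ -z "$id" ]; then
    echo "cannot derive a server number from hostname '$host'" >&2
    return 1
  fi
  mkdir -p "$data_dir"
  echo "$id" > "$data_dir/myid"
}
```

Run with no arguments on each node, it uses `hostname -s` and the default dataDir; `write_myid demo02 /tmp/zk` writes `2` to `/tmp/zk/myid`.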

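## Configure zoo.cfg

On all three nodes, create `/root/zookeeper/conf/zoo.cfg` with identical content. Each `server.N=host:2888:3888` line declares ensemble member N (2888 is the port followers use to connect to the leader, 3888 the leader-election port), and N must match that node's `myid`: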

```
# The number of milliseconds of each tick
tickTime=2000
# The number of ticks that the initial
# synchronization phase can take
initLimit=10
# The number of ticks that can pass between
# sending a request and getting an acknowledgement
syncLimit=5
# the directory where the snapshot is stored.
# do not use /tmp for storage, /tmp here is just
# example sakes.
dataDir=/root/kafka/data/zookeeper
# the port at which the clients will connect
clientPort=2181
# the maximum number of client connections.
# increase this if you need to handle more clients
#maxClientCnxns=60
#
# Be sure to read the maintenance section of the
# administrator guide before turning on autopurge.
#
# http://zookeeper.apache.org/doc/current/zookeeperAdmin.html#sc_maintenance
#
# The number of snapshots to retain in dataDir
#autopurge.snapRetainCount=3
# Purge task interval in hours
# Set to "0" to disable auto purge feature
#autopurge.purgeInterval=1
server.1=zookeeper01:2888:3888
server.2=zookeeper02:2888:3888
server.3=zookeeper03:2888:3888
```
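If a node's `myid` has no matching `server.N` line, ZooKeeper on that node cannot take part in the ensemble. The check below is a sketch, assuming the paths used in this guide; `check_myid` is a hypothetical name.

```shell
# Hypothetical check: confirm this node's myid has a matching server.N entry
# in zoo.cfg. Paths default to the ones used in this guide.
check_myid() {
  local cfg="${1:-/root/zookeeper/conf/zoo.cfg}"
  local myid_file="${2:-/root/kafka/data/zookeeper/myid}"
  local id
  id="$(tr -d '[:space:]' < "$myid_file")"
  # The trailing "=" keeps server.1 from also matching server.10
  if grep -q "^server\.${id}=" "$cfg"; then
    echo "myid ${id}: $(grep "^server\.${id}=" "$cfg")"
  else
    echo "myid ${id} has no server.${id} line in ${cfg}" >&2
    return 1
  fi
}
```

Running `check_myid` on demo01 with the files above should report the `server.1=zookeeper01:2888:3888` line.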

## Start

* 192.168.235.127

```
[root@demo01 zookeeper]# cd /root/zookeeper && bin/zkServer.sh start
ZooKeeper JMX enabled by default
Using config: /root/zookeeper/bin/../conf/zoo.cfg
Starting zookeeper ... STARTED
```

* 192.168.235.128

```
[root@demo02 zookeeper]# cd /root/zookeeper && bin/zkServer.sh start
ZooKeeper JMX enabled by default
Using config: /root/zookeeper/bin/../conf/zoo.cfg
Starting zookeeper ... STARTED
```

* 192.168.235.129

```
[root@demo03 zookeeper]# cd /root/zookeeper && bin/zkServer.sh start
ZooKeeper JMX enabled by default
Using config: /root/zookeeper/bin/../conf/zoo.cfg
Starting zookeeper ... STARTED
```

## Check status

Run `zkServer.sh status` on every node. A healthy three-node ensemble reports exactly one `leader`; the other nodes are `follower`s:

```
[root@demo01 zookeeper]# cd /root/zookeeper && bin/zkServer.sh status
ZooKeeper JMX enabled by default
Using config: /root/zookeeper/bin/../conf/zoo.cfg
Mode: follower

[root@demo02 zookeeper]# cd /root/zookeeper && bin/zkServer.sh status
ZooKeeper JMX enabled by default
Using config: /root/zookeeper/bin/../conf/zoo.cfg
Mode: follower

[root@demo03 zookeeper]# cd /root/zookeeper && bin/zkServer.sh status
ZooKeeper JMX enabled by default
Using config: /root/zookeeper/bin/../conf/zoo.cfg
Mode: leader
```
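Checking three terminals by eye works for a small cluster, but the same rule (exactly one leader, every other node a follower) can be checked mechanically. A sketch, assuming awk is available; `check_zk_modes` is hypothetical and reads the concatenated status output of all nodes on stdin. A `standalone` mode on any node usually means the `server.N` list was not picked up, so it also fails the check.

```shell
# Hypothetical check: read concatenated `zkServer.sh status` output from all
# nodes on stdin; succeed only if there is one leader and the rest follow.
check_zk_modes() {
  awk '
    /^Mode:/ { modes[$2]++; total++ }
    END {
      printf "nodes=%d leader=%d follower=%d\n", total, modes["leader"], modes["follower"]
      if (modes["leader"] == 1 && modes["leader"] + modes["follower"] == total) exit 0
      exit 1
    }'
}
```

For example, assuming passwordless SSH between the nodes: `for h in zookeeper01 zookeeper02 zookeeper03; do ssh root@$h /root/zookeeper/bin/zkServer.sh status 2>&1; done | check_zk_modes`.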

## Stop

```

[root@demo03 zookeeper]# cd /root/zookeeper && bin/zkServer.sh stop

```

# kafka

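## Download and extract the software

On all three nodes, extract the Kafka package and create the log directory:

```
mkdir -p /root/kafka
# --strip-components=1 drops the top-level kafka_2.11-0.11.0.0/ directory,
# so bin/ and config/ end up directly under /root/kafka
tar -zxvf kafka_2.11-0.11.0.0.tgz -C /root/kafka --strip-components=1
mkdir -p /root/kafka/data/kafka-logs
```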

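## Configure /root/kafka/config/server.properties

The file is the same on all three nodes except for `broker.id`, which must be unique per broker, and `listeners`, which uses each node's own hostname: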

* 192.168.235.127

```

[root@demo01 config]# vi /root/kafka/config/server.properties

# Licensed to the Apache Software Foundation (ASF) under one or more

# contributor license agreements. See the NOTICE file distributed with

# this work for additional information regarding copyright ownership.

# The ASF licenses this file to You under the Apache License, Version 2.0

# (the "License"); you may not use this file except in compliance with

# the License. You may obtain a copy of the License at

#

# http://www.apache.org/licenses/LICENSE-2.0

#

# Unless required by applicable law or agreed to in writing, software

# distributed under the License is distributed on an "AS IS" BASIS,

# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

# See the License for the specific language governing permissions and

# limitations under the License.

# see kafka.server.KafkaConfig for additional details and defaults

############################# Server Basics #############################

# The id of the broker. This must be set to a unique integer for each broker.

broker.id=1

# Switch to enable topic deletion or not, default value is false

#delete.topic.enable=true

############################# Socket Server Settings #############################

# The address the socket server listens on. It will get the value returned from

# java.net.InetAddress.getCanonicalHostName() if not configured.

# FORMAT:

# listeners = listener_name://host_name:port

# EXAMPLE:

# listeners = PLAINTEXT://your.host.name:9092

listeners=PLAINTEXT://zookeeper01:9092

# The maximum amount of time a message can sit in a log before we force a flush

#log.flush.interval.ms=1000

# A comma separated list of directories under which to store log files

log.dirs=/root/kafka/data/kafka-logs

############################# Log Retention Policy #############################

# The following configurations control the disposal of log segments. The policy can

# be set to delete segments after a period of time, or after a given size has accumulated.

# A segment will be deleted whenever *either* of these criteria are met. Deletion always happens

# from the end of the log.

# The minimum age of a log file to be eligible for deletion due to age

log.retention.hours=168

# A size-based retention policy for logs. Segments are pruned from the log as long as the remaining

# segments don't drop below log.retention.bytes. Functions independently of log.retention.hours.

#log.retention.bytes=1073741824

# The maximum size of a log segment file. When this size is reached a new log segment will be created.

log.segment.bytes=1073741824

# The interval at which log segments are checked to see if they can be deleted according

# to the retention policies

log.retention.check.interval.ms=300000

############################# Zookeeper #############################

# Zookeeper connection string (see zookeeper docs for details).

# This is a comma separated host:port pairs, each corresponding to a zk

# server. e.g. "127.0.0.1:3000,127.0.0.1:3001,127.0.0.1:3002".

# You can also append an optional chroot string to the urls to specify the

# root directory for all kafka znodes.

zookeeper.connect=zookeeper01:2181,zookeeper02:2181,zookeeper03:2181

# Timeout in ms for connecting to zookeeper

zookeeper.connection.timeout.ms=6000

############################# Group Coordinator Settings #############################

# The following configuration specifies the time, in milliseconds, that the GroupCoordinator will delay the initial consumer rebalance.

# The rebalance will be further delayed by the value of group.initial.rebalance.delay.ms as new members join the group, up to a maximum of max.poll.interval.ms.

# The default value for this is 3 seconds.

# We override this to 0 here as it makes for a better out-of-the-box experience for development and testing.

# However, in production environments the default value of 3 seconds is more suitable as this will help to avoid unnecessary, and potentially expensive, rebalances during application startup.

group.initial.rebalance.delay.ms=0

```

* 192.168.235.128

```

# Licensed to the Apache Software Foundation (ASF) under one or more

# contributor license agreements. See the NOTICE file distributed with

# this work for additional information regarding copyright ownership.

# The ASF licenses this file to You under the Apache License, Version 2.0

# (the "License"); you may not use this file except in compliance with

# the License. You may obtain a copy of the License at

#

# http://www.apache.org/licenses/LICENSE-2.0

#

# Unless required by applicable law or agreed to in writing, software

# distributed under the License is distributed on an "AS IS" BASIS,

# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

# See the License for the specific language governing permissions and

# limitations under the License.

# see kafka.server.KafkaConfig for additional details and defaults

############################# Server Basics #############################

# The id of the broker. This must be set to a unique integer for each broker.

broker.id=2

# Switch to enable topic deletion or not, default value is false

#delete.topic.enable=true

############################# Socket Server Settings #############################

# The address the socket server listens on. It will get the value returned from

# java.net.InetAddress.getCanonicalHostName() if not configured.

# FORMAT:

# listeners = listener_name://host_name:port

# EXAMPLE:

# listeners = PLAINTEXT://your.host.name:9092

listeners=PLAINTEXT://zookeeper02:9092

# The maximum amount of time a message can sit in a log before we force a flush

#log.flush.interval.ms=1000

# A comma separated list of directories under which to store log files

log.dirs=/root/kafka/data/kafka-logs

############################# Log Retention Policy #############################

# The following configurations control the disposal of log segments. The policy can

# be set to delete segments after a period of time, or after a given size has accumulated.

# A segment will be deleted whenever *either* of these criteria are met. Deletion always happens

# from the end of the log.

# The minimum age of a log file to be eligible for deletion due to age

log.retention.hours=168

# A size-based retention policy for logs. Segments are pruned from the log as long as the remaining

# segments don't drop below log.retention.bytes. Functions independently of log.retention.hours.

#log.retention.bytes=1073741824

# The maximum size of a log segment file. When this size is reached a new log segment will be created.

log.segment.bytes=1073741824

# The interval at which log segments are checked to see if they can be deleted according

# to the retention policies

log.retention.check.interval.ms=300000

############################# Zookeeper #############################

# Zookeeper connection string (see zookeeper docs for details).

# This is a comma separated host:port pairs, each corresponding to a zk

# server. e.g. "127.0.0.1:3000,127.0.0.1:3001,127.0.0.1:3002".

# You can also append an optional chroot string to the urls to specify the

# root directory for all kafka znodes.

zookeeper.connect=zookeeper01:2181,zookeeper02:2181,zookeeper03:2181

# Timeout in ms for connecting to zookeeper

zookeeper.connection.timeout.ms=6000

############################# Group Coordinator Settings #############################

# The following configuration specifies the time, in milliseconds, that the GroupCoordinator will delay the initial consumer rebalance.

# The rebalance will be further delayed by the value of group.initial.rebalance.delay.ms as new members join the group, up to a maximum of max.poll.interval.ms.

# The default value for this is 3 seconds.

# We override this to 0 here as it makes for a better out-of-the-box experience for development and testing.

# However, in production environments the default value of 3 seconds is more suitable as this will help to avoid unnecessary, and potentially expensive, rebalances during application startup.

group.initial.rebalance.delay.ms=0

```

* 192.168.235.129

```

# Licensed to the Apache Software Foundation (ASF) under one or more

# contributor license agreements. See the NOTICE file distributed with

# this work for additional information regarding copyright ownership.

# The ASF licenses this file to You under the Apache License, Version 2.0

# (the "License"); you may not use this file except in compliance with

# the License. You may obtain a copy of the License at

#

# http://www.apache.org/licenses/LICENSE-2.0

#

# Unless required by applicable law or agreed to in writing, software

# distributed under the License is distributed on an "AS IS" BASIS,

# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

# See the License for the specific language governing permissions and

# limitations under the License.

# see kafka.server.KafkaConfig for additional details and defaults

############################# Server Basics #############################

# The id of the broker. This must be set to a unique integer for each broker.

broker.id=3

# Switch to enable topic deletion or not, default value is false

#delete.topic.enable=true

############################# Socket Server Settings #############################

# The address the socket server listens on. It will get the value returned from

# java.net.InetAddress.getCanonicalHostName() if not configured.

# FORMAT:

# listeners = listener_name://host_name:port

# EXAMPLE:

# listeners = PLAINTEXT://your.host.name:9092

listeners=PLAINTEXT://zookeeper03:9092

# The maximum amount of time a message can sit in a log before we force a flush

#log.flush.interval.ms=1000

# A comma separated list of directories under which to store log files

log.dirs=/root/kafka/data/kafka-logs

############################# Log Retention Policy #############################

# The following configurations control the disposal of log segments. The policy can

# be set to delete segments after a period of time, or after a given size has accumulated.

# A segment will be deleted whenever *either* of these criteria are met. Deletion always happens

# from the end of the log.

# The minimum age of a log file to be eligible for deletion due to age

log.retention.hours=168

# A size-based retention policy for logs. Segments are pruned from the log as long as the remaining

# segments don't drop below log.retention.bytes. Functions independently of log.retention.hours.

#log.retention.bytes=1073741824

# The maximum size of a log segment file. When this size is reached a new log segment will be created.

log.segment.bytes=1073741824

# The interval at which log segments are checked to see if they can be deleted according

# to the retention policies

log.retention.check.interval.ms=300000

############################# Zookeeper #############################

# Zookeeper connection string (see zookeeper docs for details).

# This is a comma separated host:port pairs, each corresponding to a zk

# server. e.g. "127.0.0.1:3000,127.0.0.1:3001,127.0.0.1:3002".

# You can also append an optional chroot string to the urls to specify the

# root directory for all kafka znodes.

zookeeper.connect=zookeeper01:2181,zookeeper02:2181,zookeeper03:2181

# Timeout in ms for connecting to zookeeper

zookeeper.connection.timeout.ms=6000

############################# Group Coordinator Settings #############################

# The following configuration specifies the time, in milliseconds, that the GroupCoordinator will delay the initial consumer rebalance.

# The rebalance will be further delayed by the value of group.initial.rebalance.delay.ms as new members join the group, up to a maximum of max.poll.interval.ms.

# The default value for this is 3 seconds.

# We override this to 0 here as it makes for a better out-of-the-box experience for development and testing.

# However, in production environments the default value of 3 seconds is more suitable as this will help to avoid unnecessary, and potentially expensive, rebalances during application startup.

group.initial.rebalance.delay.ms=0

```
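Since only `broker.id` and `listeners` differ between nodes, the demo02 and demo03 files can be generated from demo01's copy instead of being edited by hand. A sketch, assuming sed and that broker N is reachable as `zookeeperNN` (the /etc/hosts aliases above); `render_server_properties` is a hypothetical helper.

```shell
# Hypothetical helper: derive a node's server.properties from demo01's copy by
# rewriting only the two per-node settings (broker.id and listeners).
render_server_properties() {
  local src="$1" id="$2" dest="$3" host
  host="$(printf 'zookeeper%02d' "$id")"   # 2 -> zookeeper02
  # Anchored patterns leave the commented "# listeners = ..." example untouched
  sed -e "s/^broker\.id=.*/broker.id=${id}/" \
      -e "s#^listeners=.*#listeners=PLAINTEXT://${host}:9092#" \
      "$src" > "$dest"
}
```

For example, `render_server_properties /root/kafka/config/server.properties 2 /tmp/server.properties.demo02`, then copy the result to demo02 (for instance with `scp`).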

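## Configure meta.properties

Kafka normally creates this file in `log.dirs` on first startup. When it is written by hand, it must use the same `broker.id` as `server.properties`: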

* 192.168.235.127

```

[root@demo01 kafka-logs]# vi /root/kafka/data/kafka-logs/meta.properties

version=0

broker.id=1

```

* 192.168.235.128

```

[root@demo02 zookeeper]# vi /root/kafka/data/kafka-logs/meta.properties

version=0

broker.id=2

```

* 192.168.235.129

```

[root@demo03 zookeeper]# vi /root/kafka/data/kafka-logs/meta.properties

version=0

broker.id=3

```
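The consistency between the two files can be checked before the broker is started. A sketch, assuming the paths in this guide; `check_broker_id` is a hypothetical name. It fails when the `broker.id` values differ, a condition in which Kafka refuses to start.

```shell
# Hypothetical check: compare broker.id in server.properties and
# meta.properties; Kafka will not start if they disagree.
check_broker_id() {
  local conf="${1:-/root/kafka/config/server.properties}"
  local meta="${2:-/root/kafka/data/kafka-logs/meta.properties}"
  local want got
  want="$(sed -n 's/^broker\.id=//p' "$conf")"
  got="$(sed -n 's/^broker\.id=//p' "$meta")"
  if [ -n "$want" ] && [ "$want" = "$got" ]; then
    echo "broker.id=${want} (consistent)"
  else
    echo "mismatch: server.properties=${want:-unset} meta.properties=${got:-unset}" >&2
    return 1
  fi
}
```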

## Start

Start the broker on each of the three nodes (demo01 shown). The `-daemon` flag runs the broker in the background. `jps` should then list both `QuorumPeerMain` (ZooKeeper) and `Kafka`:

```
[root@demo01 ~]# cd /root/kafka/ && bin/kafka-server-start.sh -daemon config/server.properties
[root@demo01 ~]# jps
2254 Jps
1759 QuorumPeerMain
2191 Kafka
```
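The `jps` check can be scripted as well, which helps when repeating it on every node. A sketch, assuming awk is available; `check_jvms` is hypothetical and reads `jps` output on stdin.

```shell
# Hypothetical check: feed `jps` output on stdin and confirm that both the
# ZooKeeper (QuorumPeerMain) and Kafka broker JVMs are running on this node.
check_jvms() {
  local jps_out missing=0 name
  jps_out="$(cat)"
  for name in QuorumPeerMain Kafka; do
    # Exact match on the class-name column, so "Jps" never counts as "Kafka"
    if printf '%s\n' "$jps_out" | awk -v n="$name" '$2 == n { found=1 } END { exit !found }'; then
      echo "$name: running"
    else
      echo "$name: NOT running" >&2
      missing=1
    fi
  done
  return "$missing"
}
```

Usage: `jps | check_jvms`.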

## Test

Create a topic `owen` with 3 partitions and a replication factor of 3, then describe it. `Replicas` lists the brokers assigned to each partition and `Isr` the subset currently in sync with the leader:

```
[root@demo01 config]# cd /root/kafka/ && bin/kafka-topics.sh --create --zookeeper zookeeper01:2181,zookeeper02:2181,zookeeper03:2181 --replication-factor 3 --partitions 3 --topic owen
Created topic "owen".
[root@demo01 kafka]# bin/kafka-topics.sh --describe --zookeeper zookeeper01:2181,zookeeper02:2181,zookeeper03:2181 --topic owen
Topic:owen PartitionCount:3 ReplicationFactor:3 Configs:
Topic: owen Partition: 0 Leader: 3 Replicas: 3,2,1 Isr: 3
Topic: owen Partition: 1 Leader: 1 Replicas: 1,3,2 Isr: 1,3,2
Topic: owen Partition: 2 Leader: 2 Replicas: 2,1,3 Isr: 2,1,3
```
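In the output above, partition 0 has replicas `3,2,1` but only `3` in its ISR, so brokers 1 and 2 were not yet in sync for that partition when the command ran. `kafka-topics.sh --describe --under-replicated-partitions` lists such partitions directly; the sketch below does the same on saved output, assuming awk; `under_replicated` is a hypothetical name.

```shell
# Hypothetical check: read `kafka-topics.sh --describe` output on stdin and
# print every partition whose ISR is smaller than its replica set.
under_replicated() {
  awk '
    /Partition:/ {
      part = repl = isr = ""
      for (i = 1; i < NF; i++) {
        if ($i == "Partition:") part = $(i + 1)
        if ($i == "Replicas:")  repl = $(i + 1)
        if ($i == "Isr:")       isr  = $(i + 1)
      }
      if (split(isr, a, ",") < split(repl, b, ","))
        printf "partition %s: replicas=%s isr=%s\n", part, repl, isr
    }'
}
```

Usage: pipe the describe command into it, e.g. `bin/kafka-topics.sh --describe --zookeeper zookeeper01:2181 --topic owen | under_replicated`. The header line (`PartitionCount:`) does not match the `Partition:` pattern, so only per-partition lines are checked.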

## Consume messages

Start a console consumer on demo02 that reads the topic from the beginning:

```
[root@demo02 bin]# ./kafka-console-consumer.sh --bootstrap-server zookeeper01:9092,zookeeper02:9092,zookeeper03:9092 --from-beginning --topic owen
```

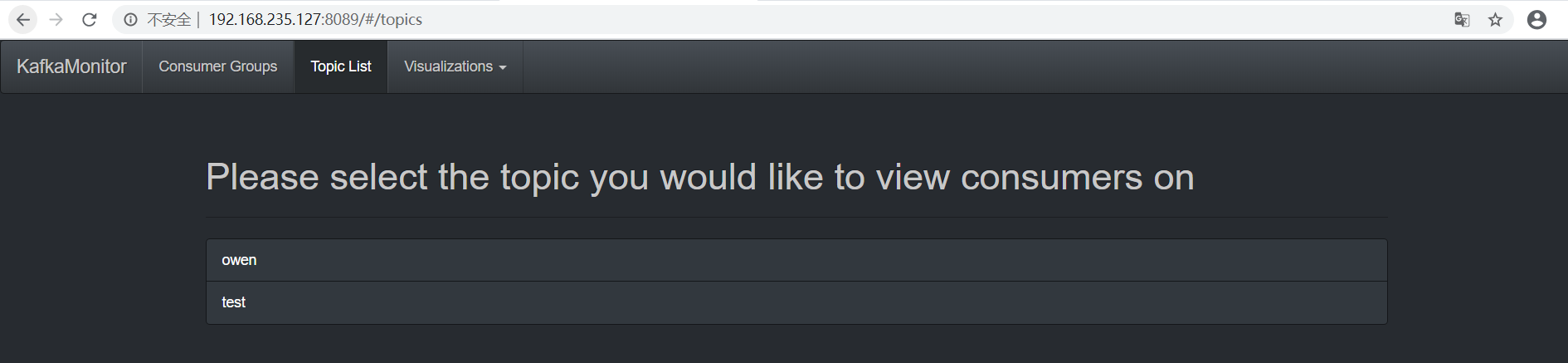
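The consumer only prints something once messages have been produced to the topic. Producer and consumer both need a broker list in which each broker appears once; a sketch for building it, assuming a POSIX shell; `broker_list` is a hypothetical helper that appends port 9092 (overridable through `PORT`) to each host given.

```shell
# Hypothetical helper: build a comma-separated broker list from hostnames,
# e.g. "zookeeper01:9092,zookeeper02:9092,zookeeper03:9092".
broker_list() {
  local port="${PORT:-9092}" list="" h
  for h in "$@"; do
    list="${list:+$list,}${h}:${port}"   # add a comma only after the first entry
  done
  printf '%s\n' "$list"
}
```

For example, in another terminal run `bin/kafka-console-producer.sh --broker-list "$(broker_list zookeeper01 zookeeper02 zookeeper03)" --topic owen` (the 0.11 console producer takes `--broker-list`); each line typed there should appear in the consumer.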

## Monitoring

Upload `KafkaOffsetMonitor-assembly-0.2.0.jar` to `/root/kafka/libs`, then start it from that directory. `--zk` points at the ZooKeeper ensemble and `--port 8089` sets the port of the web UI:

```
[root@demo01 libs]# java -cp ../libs/KafkaOffsetMonitor-assembly-0.2.0.jar com.quantifind.kafka.offsetapp.OffsetGetterWeb --zk zookeeper01:2181,zookeeper02:2181,zookeeper03:2181 --port 8089 --refresh 10.seconds --retain 2.days
serving resources from: jar:file:/root/kafka/libs/KafkaOffsetMonitor-assembly-0.2.0.jar!/offsetapp
SLF4J: Failed to load class "org.slf4j.impl.StaticLoggerBinder".
SLF4J: Defaulting to no-operation (NOP) logger implementation
SLF4J: See http://www.slf4j.org/codes.html#StaticLoggerBinder for further details.
2020-05-28 13:20:39.506:INFO:oejs.Server:jetty-7.x.y-SNAPSHOT
log4j:WARN No appenders could be found for logger (org.I0Itec.zkclient.ZkConnection).
log4j:WARN Please initialize the log4j system properly.
log4j:WARN See http://logging.apache.org/log4j/1.2/faq.html#noconfig for more info.
```

The SLF4J and log4j warnings only mean that no logging backend is configured for the monitor.
