不做強制要求,能完成最好。但是要求要明白 NameNode 和 ResourceManager是存在單點故障問題的,解決方式就是 HA(High Available、即高可用性集群)。

[TOC]

# 1. HDFS-HA集群配置

## 1.1 配置HDFS-HA集群

1. 官方地址:http://hadoop.apache.org/

2. HDFS高可用集群規劃,請先搭建好一個Hadoop完全分布式集群(可以未進行namenode格式化)和ZooKeeper完全分布式環境已經安裝完成。

| Hadoop102 | Hadoop103 | Hadoop104 |

| --- | --- | --- |

| NameNode | NameNode | |

| ResourceManager | ResourceManager | |

| ZKFC(zookeeper的一個集群監控工具) | ZKFC | |

| DataNode | DataNode | DataNode |

| JournalNode | JournalNode | JournalNode |

| NodeManager | NodeManager | NodeManager |

| ZooKeeper | ZooKeeper | ZooKeeper |

3. 在hadoop102配置core-site.xml

```xml

<configuration>

<!-- 把兩個NameNode)的地址組裝成一個集群mycluster -->

<property>

<name>fs.defaultFS</name>

<value>hdfs://mycluster</value>

</property>

<!-- 指定hadoop運行時產生文件的存儲目錄 -->

<property>

<name>hadoop.tmp.dir</name>

<value>/opt/install/hadoop/data/tmp</value>

</property>

</configuration>

```

4. 在hadoop102配置hdfs-site.xml

```xml

<configuration>

<!-- 完全分布式集群名稱 -->

<property>

<name>dfs.nameservices</name>

<value>mycluster</value>

</property>

<!-- 集群中NameNode節點都有哪些,這里是nn1和nn2 -->

<property>

<name>dfs.ha.namenodes.mycluster</name>

<value>nn1,nn2</value>

</property>

<!-- nn1的RPC通信地址 -->

<property>

<name>dfs.namenode.rpc-address.mycluster.nn1</name>

<value>hadoop102:9000</value>

</property>

<!-- nn2的RPC通信地址 -->

<property>

<name>dfs.namenode.rpc-address.mycluster.nn2</name>

<value>hadoop103:9000</value>

</property>

<!-- nn1的http通信地址 -->

<property>

<name>dfs.namenode.http-address.mycluster.nn1</name>

<value>hadoop102:50070</value>

</property>

<!-- nn2的http通信地址 -->

<property>

<name>dfs.namenode.http-address.mycluster.nn2</name>

<value>hadoop103:50070</value>

</property>

<!-- 指定NameNode元數據在JournalNode上的存放位置 -->

<property>

<name>dfs.namenode.shared.edits.dir</name>

<value>qjournal://hadoop102:8485;hadoop103:8485;hadoop104:8485/mycluster</value>

</property>

<!-- 配置隔離機制,即同一時刻只能有一臺服務器對外響應,多個機制用換行分割,即每個機制占用一行 -->

<property>

<name>dfs.ha.fencing.methods</name>

<value>

sshfence

shell(/bin/true)

</value>

</property>

<!-- 使用隔離機制時需要ssh無秘鑰登錄-->

<property>

<name>dfs.ha.fencing.ssh.private-key-files</name>

<value>/home/hadoop/.ssh/id_rsa</value>

</property>

<!-- 聲明journalnode服務器存儲目錄-->

<property>

<name>dfs.journalnode.edits.dir</name>

<value>/opt/install/hadoop/data/jn</value>

</property>

<!-- 關閉權限檢查-->

<property>

<name>dfs.permissions.enable</name>

<value>false</value>

</property>

<!-- 訪問代理類:client,mycluster,active配置失敗自動切換實現方式-->

<property>

<name>dfs.client.failover.proxy.provider.mycluster</name>

<value>org.apache.hadoop.hdfs.server.namenode.ha.ConfiguredFailoverProxyProvider</value>

</property>

</configuration>

```

5. 拷貝配置好的hadoop環境到其他節點。

```xml

scp core-site.xml root@hadoop103:$PWD

scp hdfs-site.xml root@hadoop103:$PWD

```

<br/>

## 1.2 啟動HDFS-HA集群

1. 在各個JournalNode節點上,輸入以下命令啟動journalnode服務:

```sql

$HADOOP_HOME/sbin/hadoop-daemon.sh start journalnode

```

2. 在[nn1]上,對其進行格式化,并啟動(如果之前已經格式化過,此處格式化會導致namenode和datanode VRESION中的clusterID不一致,進而導致datanode無法啟動。解決方案:修改datanode中的clusterID與namenode中的clusterID相同):

```sql

$HADOOP_HOME/bin/hdfs namenode -format

$HADOOP_HOME/sbin/hadoop-daemon.sh start namenode

```

3. 在[nn2]上,同步nn1的元數據信息:

```sql

$HADOOP_HOME/bin/hdfs namenode -bootstrapStandby

```

4. 啟動[nn2]:

```sql

$HADOOP_HOME/sbin/hadoop-daemon.sh start namenode

```

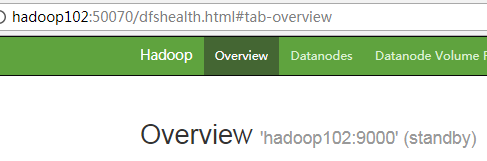

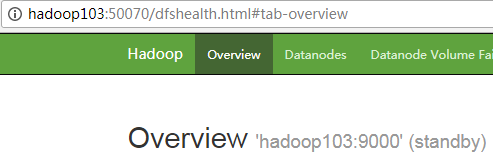

5. 查看web頁面顯示

6. 在[nn1]上,啟動所有datanode

```sql

$HADOOP_HOME/sbin/hadoop-daemons.sh start datanode

```

7. 將[nn1]切換為Active

```sql

$HADOOP_HOME/bin/hdfs haadmin -transitionToActive nn1

```

8. 查看是否Active

```sql

$HADOOP_HOME/bin/hdfs haadmin -getServiceState nn1

```

<br/>

## 1.3 配置HDFS-HA自動故障轉移

1. 具體配置

(1)在hdfs-site.xml中增加

```xml

<property>

<name>dfs.ha.automatic-failover.enabled</name>

<value>true</value>

</property>

(2)在core-site.xml文件中增加

<property>

<name>ha.zookeeper.quorum</name>

<value>hadoop102:2181,hadoop103:2181,hadoop104:2181</value>

</property>

```

2. 啟動

(1)關閉所有HDFS服務:

```sql

stop-dfs.sh

```

(2)啟動Zookeeper集群:

```sql

zkServer.sh start

```

(3)初始化HA在Zookeeper中狀態:

```sql

hdfs zkfc -formatZK

```

(4)啟動HDFS服務:

```sql

start-dfs.sh

```

(5)在各個NameNode節點上啟動DFSZK Failover Controller,先在哪臺機器啟動,哪個機器的NameNode就是Active NameNode(默認start-dfs.sh已經自動啟動,以下是單獨啟動的命令)

```sql

hadoop-daemon.sh start zkfc

```

3.驗證

(1)將Active NameNode進程kill

```sql

kill -9 namenode的進程id

```

(2)將Active NameNode機器斷開網絡

```sql

service network stop

```

<br/>

# 2. YARN-HA配置

## 2.1 配置YARN-HA集群

1. 規劃集群

| hadoop102 | hadoop103 |

| --- | --- |

| ResourceManager | ResourceManager |

2. 具體配置

(1)yarn-site.xml

```xml

<configuration>

<property>

<name>yarn.nodemanager.aux-services</name>

<value>mapreduce_shuffle</value>

</property>

<!--啟用resourcemanager ha-->

<property>

<name>yarn.resourcemanager.ha.enabled</name>

<value>true</value>

</property>

<!--聲明兩臺resourcemanager的地址-->

<property>

<name>yarn.resourcemanager.cluster-id</name>

<value>cluster-yarn1</value>

</property>

<property>

<name>yarn.resourcemanager.ha.rm-ids</name>

<value>rm1,rm2</value>

</property>

<property>

<name>yarn.resourcemanager.hostname.rm1</name>

<value>hadoop102</value>

</property>

<property>

<name>yarn.resourcemanager.hostname.rm2</name>

<value>hadoop103</value>

</property>

<!--指定zookeeper集群的地址-->

<property>

<name>yarn.resourcemanager.zk-address</name>

<value>hadoop102:2181,hadoop103:2181,hadoop104:2181</value>

</property>

<!--啟用自動恢復-->

<property>

<name>yarn.resourcemanager.recovery.enabled</name>

<value>true</value>

</property>

<!--指定resourcemanager的狀態信息存儲在zookeeper集群-->

<property>

<name>yarn.resourcemanager.store.class</name>

<value>org.apache.hadoop.yarn.server.resourcemanager.recovery.ZKRMStateStore</value>

</property>

</configuration>

```

(2)同步更新其他節點的yarn-site.xml配置信息

3. 啟動yarn

(1)在hadoop102中執行:

`start-yarn.sh`

(2)在hadoop103中執行:

`yarn-daemon.sh start resourcemanager`

(3)查看服務狀態

`yarn rmadmin -getServiceState rm1`

- Hadoop

- hadoop是什么?

- Hadoop組成

- hadoop官網

- hadoop安裝

- hadoop配置

- 本地運行模式配置

- 偽分布運行模式配置

- 完全分布運行模式配置

- HDFS分布式文件系統

- HDFS架構

- HDFS設計思想

- HDFS組成架構

- HDFS文件塊大小

- HDFS優缺點

- HDFS Shell操作

- HDFS JavaAPI

- 基本使用

- HDFS的I/O 流操作

- 在SpringBoot項目中的API

- HDFS讀寫流程

- HDFS寫流程

- HDFS讀流程

- NN和SNN關系

- NN和SNN工作機制

- Fsimage和 Edits解析

- checkpoint時間設置

- NameNode故障處理

- 集群安全模式

- DataNode工作機制

- 支持的文件格式

- MapReduce分布式計算模型

- MapReduce是什么?

- MapReduce設計思想

- MapReduce優缺點

- MapReduce基本使用

- MapReduce編程規范

- WordCount案例

- MapReduce任務進程

- Hadoop序列化對象

- 為什么要序列化

- 常用數據序列化類型

- 自定義序列化對象

- MapReduce框架原理

- MapReduce工作流程

- MapReduce核心類

- MapTask工作機制

- Shuffle機制

- Partition分區

- Combiner合并

- ReduceTask工作機制

- OutputFormat

- 使用MapReduce實現SQL Join操作

- Reduce join

- Reduce join 代碼實現

- Map join

- Map join 案例實操

- MapReduce 開發總結

- Hadoop 優化

- MapReduce 優化需要考慮的點

- MapReduce 優化方法

- 分布式資源調度框架 Yarn

- Yarn 基本架構

- ResourceManager(RM)

- NodeManager(NM)

- ApplicationMaster

- Container

- 作業提交全過程

- JobHistoryServer 使用

- 資源調度器

- 先進先出調度器(FIFO)

- 容量調度器(Capacity Scheduler)

- 公平調度器(Fair Scheduler)

- Yarn 常用命令

- Zookeeper

- zookeeper是什么?

- zookeeper完全分布式搭建

- Zookeeper特點

- Zookeeper數據結構

- Zookeeper 內部原理

- 選舉機制

- stat 信息中字段解釋

- 選擇機制中的概念

- 選舉消息內容

- 監聽器原理

- Hadoop 高可用集群搭建

- Zookeeper 應用

- Zookeeper Shell操作

- Zookeeper Java應用

- Hive

- Hive是什么?

- Hive的優缺點

- Hive架構

- Hive元數據存儲模式

- 內嵌模式

- 本地模式

- 遠程模式

- Hive環境搭建

- 偽分布式環境搭建

- Hive命令工具

- 命令行模式

- 交互模式

- Hive數據類型

- Hive數據結構

- 參數配置方式

- Hive數據庫

- 數據庫存儲位置

- 數據庫操作

- 表的創建

- 建表基本語法

- 內部表

- 外部表

- 臨時表

- 建表高階語句

- 表的刪除與修改

- 分區表

- 靜態分區

- 動態分區

- 分桶表

- 創建分桶表

- 分桶抽樣

- Hive視圖

- 視圖的創建

- 側視圖Lateral View

- Hive數據導入導出

- 導入數據

- 導出數據

- 查詢表數據量

- Hive事務

- 事務是什么?

- Hive事務的局限性和特點

- Hive事務的開啟和設置

- Hive PLSQL

- Hive高階查詢

- 查詢基本語法

- 基本查詢

- distinct去重

- where語句

- 列正則表達式

- 虛擬列

- CTE查詢

- 嵌套查詢

- join語句

- 內連接

- 左連接

- 右連接

- 全連接

- 多表連接

- 笛卡爾積

- left semi join

- group by分組

- having刷選

- union與union all

- 排序

- order by

- sort by

- distribute by

- cluster by

- 聚合運算

- 基本聚合

- 高級聚合

- 窗口函數

- 序列窗口函數

- 聚合窗口函數

- 分析窗口函數

- 窗口函數練習

- 窗口子句

- Hive函數

- Hive函數分類

- 字符串函數

- 類型轉換函數

- 數學函數

- 日期函數

- 集合函數

- 條件函數

- 聚合函數

- 表生成函數

- 自定義Hive函數

- 自定義函數分類

- 自定義Hive函數流程

- 添加JAR包的方式

- 自定義臨時函數

- 自定義永久函數

- Hive優化

- Hive性能調優工具

- EXPLAIN

- ANALYZE

- Fetch抓取

- 本地模式

- 表的優化

- 小表 join 大表

- 大表 join 大表

- 開啟Map Join

- group by

- count(distinct)

- 笛卡爾積

- 行列過濾

- 動態分區調整

- 分區分桶表

- 數據傾斜

- 數據傾斜原因

- 調整Map數

- 調整Reduce數

- 產生數據傾斜的場景

- 并行執行

- 嚴格模式

- JVM重用

- 推測執行

- 啟用CBO

- 啟動矢量化

- 使用Tez引擎

- 壓縮算法和文件格式

- 文件格式

- 壓縮算法

- Zeppelin

- Zeppelin是什么?

- Zeppelin安裝

- 配置Hive解釋器

- Hbase

- Hbase是什么?

- Hbase環境搭建

- Hbase分布式環境搭建

- Hbase偽分布式環境搭建

- Hbase架構

- Hbase架構組件

- Hbase數據存儲結構

- Hbase原理

- Hbase Shell

- 基本操作

- 表操作

- namespace

- Hbase Java Api

- Phoenix集成Hbase

- Phoenix是什么?

- 安裝Phoenix

- Phoenix數據類型

- Phoenix Shell

- HBase與Hive集成

- HBase與Hive的對比

- HBase與Hive集成使用

- Hbase與Hive集成原理

- HBase優化

- RowKey設計

- 內存優化

- 基礎優化

- Hbase管理

- 權限管理

- Region管理

- Region的自動拆分

- Region的預拆分

- 到底采用哪種拆分策略?

- Region的合并

- HFile的合并

- 為什么要有HFile的合并

- HFile合并方式

- Compaction執行時間

- Compaction相關控制參數

- 演示示例

- Sqoop

- Sqoop是什么?

- Sqoop環境搭建

- RDBMS導入到HDFS

- RDBMS導入到Hive

- RDBMS導入到Hbase

- HDFS導出到RDBMS

- 使用sqoop腳本

- Sqoop常用命令

- Hadoop數據模型

- TextFile

- SequenceFile

- Avro

- Parquet

- RC&ORC

- 文件存儲格式比較

- Spark

- Spark是什么?

- Spark優勢

- Spark與MapReduce比較

- Spark技術棧

- Spark安裝

- Spark Shell

- Spark架構

- Spark編程入口

- 編程入口API

- SparkContext

- SparkSession

- Spark的maven依賴

- Spark RDD編程

- Spark核心數據結構-RDD

- RDD 概念

- RDD 特性

- RDD編程

- RDD編程流程

- pom依賴

- 創建算子

- 轉換算子

- 動作算子

- 持久化算子

- RDD 與閉包

- csv/json數據源

- Spark分布式計算原理

- RDD依賴

- RDD轉換

- RDD依賴

- DAG工作原理

- Spark Shuffle原理

- Shuffle的作用

- ShuffleManager組件

- Shuffle實踐

- RDD持久化

- 緩存機制

- 檢查點

- 檢查點與緩存的區別

- RDD共享變量

- 廣播變量

- 累計器

- RDD分區設計

- 數據傾斜

- 數據傾斜的根本原因

- 定位導致的數據傾斜

- 常見數據傾斜解決方案

- Spark SQL

- SQL on Hadoop

- Spark SQL是什么

- Spark SQL特點

- Spark SQL架構

- Spark SQL運行原理

- Spark SQL編程

- Spark SQL編程入口

- 創建Dataset

- Dataset是什么

- SparkSession創建Dataset

- 樣例類創建Dataset

- 創建DataFrame

- DataFrame是什么

- 結構化數據文件創建DataFrame

- RDD創建DataFrame

- Hive表創建DataFrame

- JDBC創建DataFrame

- SparkSession創建

- RDD、DataFrame、Dataset

- 三者對比

- 三者相互轉換

- RDD轉換為DataFrame

- DataFrame轉換為RDD

- DataFrame API

- DataFrame API分類

- Action 操作

- 基礎 Dataset 函數

- 強類型轉換

- 弱類型轉換

- Spark SQL外部數據源

- Parquet文件

- Hive表

- RDBMS表

- JSON/CSV

- Spark SQL函數

- Spark SQL內置函數

- 自定SparkSQL函數

- Spark SQL CLI

- Spark SQL性能優化

- Spark GraphX圖形數據分析

- 為什么需要圖計算

- 圖的概念

- 圖的術語

- 圖的經典表示法

- Spark Graphix簡介

- Graphx核心抽象

- Graphx Scala API

- 核心組件

- 屬性圖應用示例1

- 屬性圖應用示例2

- 查看圖信息

- 圖的算子

- 連通分量

- PageRank算法

- Pregel分布式計算框架

- Flume日志收集

- Flume是什么?

- Flume官方文檔

- Flume架構

- Flume安裝

- Flume使用過程

- Flume組件

- Flume工作流程

- Flume事務

- Source、Channel、Sink文檔

- Source文檔

- Channel文檔

- Sink文檔

- Flume攔截器

- Flume攔截器概念

- 配置攔截器

- 自定義攔截器

- Flume可靠性保證

- 故障轉移

- 負載均衡

- 多層代理

- 多路復用

- Kafka

- 消息中間件MQ

- Kafka是什么?

- Kafka安裝

- Kafka本地單機部署

- Kafka基本命令使用

- Topic的生產與消費

- 基本命令

- 查看kafka目錄

- Kafka架構

- Kafka Topic

- Kafka Producer

- Kafka Consumer

- Kafka Partition

- Kafka Message

- Kafka Broker

- 存儲策略

- ZooKeeper在Kafka中的作用

- 副本同步

- 容災

- 高吞吐

- Leader均衡機制

- Kafka Scala API

- Producer API

- Consumer API

- Kafka優化

- 消費者參數優化

- 生產者參數優化

- Spark Streaming

- 什么是流?

- 批處理和流處理

- Spark Streaming簡介

- 流數據處理架構

- 內部工作流程

- StreamingContext組件

- SparkStreaming的編程入口

- WordCount案例

- DStream

- DStream是什么?

- Input DStream與Receivers接收器

- DStream API

- 轉換操作

- 輸出操作

- 數據源

- 數據源分類

- Socket數據源

- 統計HDFS文件的詞頻

- 處理狀態數據

- SparkStreaming整合SparkSQL

- SparkStreaming整合Flume

- SparkStreaming整合Kafka

- 自定義數據源

- Spark Streaming優化策略

- 優化運行時間

- 優化內存使用

- 數據倉庫

- 數據倉庫是什么?

- 數據倉庫的意義

- 數據倉庫和數據庫的區別

- OLTP和OLAP的區別

- OLTP的特點

- OLAP的特點

- OLTP與OLAP對比

- 數據倉庫架構

- Inmon架構

- Kimball架構

- 混合型架構

- 數據倉庫的解決方案

- 數據ETL

- 數據倉庫建模流程

- 維度模型

- 星型模式

- 雪花模型

- 星座模型

- 數據ETL處理

- 數倉分層術語

- 數據抽取方式

- CDC抽取方案

- 數據轉換

- 常見的ETL工具