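# Implementing elastic net regression

Elastic net regression is a type of regression that combines lasso regression with ridge regression by adding both L1 and L2 regularization terms to the loss function.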

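## Getting ready

After the previous two recipes, implementing elastic net regression should be straightforward, so we will implement it as a multiple linear regression on the iris dataset instead of sticking to two-dimensional data as before. We will use petal length, petal width, and sepal width to predict sepal length.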

## How to do it...

We proceed with the recipe as follows:

1. First, we load the necessary libraries and initialize a graph session, as follows:

```py
import matplotlib.pyplot as plt
import numpy as np
import tensorflow as tf
from sklearn import datasets

sess = tf.Session()
```

2. Now, we load the data. This time, each element of the `x` data is a list of three values instead of one. Use the following code:

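```py
iris = datasets.load_iris()
# Columns 1-3 (sepal width, petal length, petal width) are the predictors
x_vals = np.array([[x[1], x[2], x[3]] for x in iris.data])
# Column 0 (sepal length) is the target
y_vals = np.array([y[0] for y in iris.data])
```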
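The two list comprehensions above pick columns out of `iris.data`, whose columns are sepal length, sepal width, petal length, and petal width, in that order. As a minimal sketch, the same split can be written with NumPy slicing. The array `sample` below is a hand-typed stand-in for `iris.data` (its first three rows), used only so the snippet runs without scikit-learn:

```python
import numpy as np

# Stand-in for iris.data (assumption: only the first three rows, typed by hand).
# Columns: sepal length, sepal width, petal length, petal width.
sample = np.array([
    [5.1, 3.5, 1.4, 0.2],
    [4.9, 3.0, 1.4, 0.2],
    [4.7, 3.2, 1.3, 0.2],
])

x_vals = sample[:, 1:4]  # predictors: columns 1 to 3
y_vals = sample[:, 0]    # target: column 0 (sepal length)

print(x_vals.shape, y_vals.shape)
```

Slicing avoids the Python-level loop, but both forms yield the same arrays.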

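3. Next, we declare the batch size, placeholders, variables, and model output. The only difference here is that we change the shape specification of the `x` data placeholder so that it takes three values instead of one, as follows: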

```py
batch_size = 50
learning_rate = 0.001

x_data = tf.placeholder(shape=[None, 3], dtype=tf.float32)
y_target = tf.placeholder(shape=[None, 1], dtype=tf.float32)

# One slope per feature, plus a single intercept
A = tf.Variable(tf.random_normal(shape=[3,1]))
b = tf.Variable(tf.random_normal(shape=[1,1]))

model_output = tf.add(tf.matmul(x_data, A), b)
```
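To make the shapes in the model output concrete, here is a small NumPy sketch of the same computation. The random arrays are placeholders for one batch and for the initial values of `A` and `b`; they are not the recipe's data:

```python
import numpy as np

rng = np.random.default_rng(0)

batch = rng.normal(size=(50, 3))  # stand-in for one batch fed to x_data
A = rng.normal(size=(3, 1))       # one slope per feature
b = rng.normal(size=(1, 1))       # intercept

# Same arithmetic as tf.add(tf.matmul(x_data, A), b):
# (50, 3) @ (3, 1) gives (50, 1), and b is broadcast across the 50 rows.
model_output = batch @ A + b
print(model_output.shape)  # (50, 1)
```

Because the output is a column of shape `(batch, 1)`, the targets must also be fed as a column, which is why `y_target` is declared with shape `[None, 1]`.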

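4. For elastic net, the loss function includes the L1 and L2 norms of the partial slopes. We create these terms and then add them to the loss function, as follows: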

```py
elastic_param1 = tf.constant(1.)
elastic_param2 = tf.constant(1.)

l1_a_loss = tf.reduce_mean(tf.abs(A))
l2_a_loss = tf.reduce_mean(tf.square(A))

e1_term = tf.multiply(elastic_param1, l1_a_loss)
e2_term = tf.multiply(elastic_param2, l2_a_loss)

# Mean squared error plus both penalty terms, expanded to a 1-element tensor
loss = tf.expand_dims(tf.add(tf.add(tf.reduce_mean(tf.square(y_target - model_output)), e1_term), e2_term), 0)
```
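The loss above can be checked by hand on a tiny example. The following is a NumPy sketch of the same arithmetic, not the recipe's TensorFlow graph; the function name `elastic_net_loss` and the toy inputs are made up for illustration:

```python
import numpy as np

def elastic_net_loss(x, y, A, b, param1=1.0, param2=1.0):
    """Mean squared error plus weighted mean(|A|) and mean(A**2) penalties."""
    residual = y - (x @ A + b)
    mse = np.mean(residual ** 2)
    l1_a_loss = np.mean(np.abs(A))
    l2_a_loss = np.mean(A ** 2)
    return mse + param1 * l1_a_loss + param2 * l2_a_loss

# Toy inputs (hypothetical): two samples, three features
x = np.array([[1.0, 0.0, 0.0],
              [0.0, 1.0, 0.0]])
y = np.array([[1.0], [2.0]])
A = np.array([[1.0], [-2.0], [0.0]])
b = np.array([[0.0]])

# Residuals are 0 and 4, so the MSE is 8; mean(|A|) = 1; mean(A**2) = 5/3.
print(elastic_net_loss(x, y, A, b))
```

Note that the recipe averages the penalties over the three slopes instead of summing them, which only rescales the effective penalty weights. The intercept `b` is not penalized.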

5. Now, we can initialize the variables, declare our optimizer, run the training loop, and fit our coefficients, as follows:

```py
init = tf.global_variables_initializer()
sess.run(init)

my_opt = tf.train.GradientDescentOptimizer(learning_rate)
train_step = my_opt.minimize(loss)

loss_vec = []
for i in range(1000):
    # Sample a random batch of row indices (with replacement)
    rand_index = np.random.choice(len(x_vals), size=batch_size)
    rand_x = x_vals[rand_index]
    # Turn the targets into a column to match y_target's [None, 1] shape
    rand_y = np.transpose([y_vals[rand_index]])
    sess.run(train_step, feed_dict={x_data: rand_x, y_target: rand_y})
    temp_loss = sess.run(loss, feed_dict={x_data: rand_x, y_target: rand_y})
    loss_vec.append(temp_loss[0])
    if (i+1)%250==0:
        print('Step #' + str(i+1) + ' A = ' + str(sess.run(A)) + ' b = ' + str(sess.run(b)))
        print('Loss = ' + str(temp_loss))
```
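To show what the optimizer does in each iteration, here is a NumPy sketch of the same loop with the gradients written out by hand. It is an illustration under stated assumptions, not the recipe's TensorFlow code: the helper names, the synthetic data, and the values in `true_A` are all hypothetical, and `np.sign(A)` is used as a subgradient of the absolute value.

```python
import numpy as np

def elastic_net_loss(x, y, A, b, param1=1.0, param2=1.0):
    """Mean squared error plus weighted mean(|A|) and mean(A**2) penalties."""
    residual = y - (x @ A + b)
    return (np.mean(residual ** 2)
            + param1 * np.mean(np.abs(A))
            + param2 * np.mean(A ** 2))

def elastic_net_step(x, y, A, b, lr=0.001, param1=1.0, param2=1.0):
    """One gradient-descent update; returns the new (A, b)."""
    n = x.shape[0]
    residual = y - (x @ A + b)                         # shape (n, 1)
    grad_A = (-2.0 / n) * (x.T @ residual)             # squared-error part
    grad_A = grad_A + param1 * np.sign(A) / A.size     # subgradient of mean(|A|)
    grad_A = grad_A + param2 * 2.0 * A / A.size        # gradient of mean(A**2)
    grad_b = -2.0 * np.mean(residual, keepdims=True)   # b is not penalized
    return A - lr * grad_A, b - lr * grad_b

# Synthetic stand-in for the iris data (assumption, for a runnable sketch)
rng = np.random.default_rng(0)
x_vals = rng.normal(size=(150, 3))
true_A = np.array([[0.5], [-1.0], [2.0]])  # hypothetical slopes
y_vals = x_vals @ true_A + 3.0             # hypothetical intercept of 3.0

A = rng.normal(size=(3, 1))
b = rng.normal(size=(1, 1))
loss_vec = []
for i in range(1000):
    rand_index = rng.choice(len(x_vals), size=50)
    rand_x, rand_y = x_vals[rand_index], y_vals[rand_index]
    A, b = elastic_net_step(rand_x, rand_y, A, b)
    loss_vec.append(elastic_net_loss(rand_x, rand_y, A, b))

print('A =', A.ravel(), 'b =', b.ravel())
```

As in the recipe, the loss is evaluated on the same batch right after the update, so `loss_vec` is a noisy per-batch estimate instead of a loss over the full dataset.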

6. Here is the output of the code:

```py
Step #250 A = [[ 0.42095602]
 [ 0.1055888 ]
 [ 1.77064979]] b = [[ 1.76164341]]
Loss = [ 2.87764359]
Step #500 A = [[ 0.62762028]
 [ 0.06065864]
 [ 1.36294949]] b = [[ 1.87629771]]
Loss = [ 1.8032167]
Step #750 A = [[ 0.67953539]
 [ 0.102514 ]
 [ 1.06914485]] b = [[ 1.95604002]]
Loss = [ 1.33256555]
Step #1000 A = [[ 0.6777274 ]
 [ 0.16535147]
 [ 0.8403284 ]] b = [[ 2.02246833]]
Loss = [ 1.21458709]
```

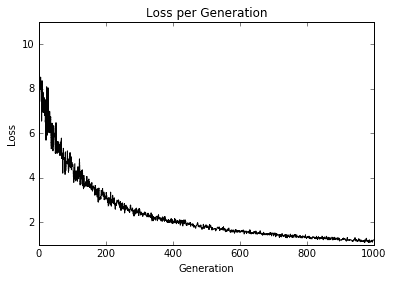

7. Now, we can plot the loss over the training iterations to make sure the algorithm converged, as follows:

```py
plt.plot(loss_vec, 'k-')
plt.title('Loss per Generation')
plt.xlabel('Generation')
plt.ylabel('Loss')
plt.show()
```

The preceding code produces the loss plot for the training run:

Figure 10: Elastic net regression loss plotted over 1,000 training iterations

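## How it works...

Here, elastic net regression is implemented together with multiple linear regression. We can see that, with these regularization terms in the loss function, convergence is slower than in the previous recipes. Regularization is as simple as adding the appropriate terms to the loss function.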
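Written out, the loss minimized in this recipe has the following form. The symbols are notation introduced here for illustration: $n$ is the batch size, $x_i$ is the row of three features for sample $i$, $A_j$ are the three slopes, and $\lambda_1$, $\lambda_2$ stand for `elastic_param1` and `elastic_param2`, both set to 1 in the code.

```latex
\text{loss}
  = \frac{1}{n}\sum_{i=1}^{n}\bigl(y_i - (x_i A + b)\bigr)^2
  + \lambda_1 \cdot \frac{1}{3}\sum_{j=1}^{3}\lvert A_j\rvert
  + \lambda_2 \cdot \frac{1}{3}\sum_{j=1}^{3} A_j^{2}
```

Setting $\lambda_2 = 0$ leaves only the lasso-style penalty, and setting $\lambda_1 = 0$ leaves only the ridge-style penalty.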
